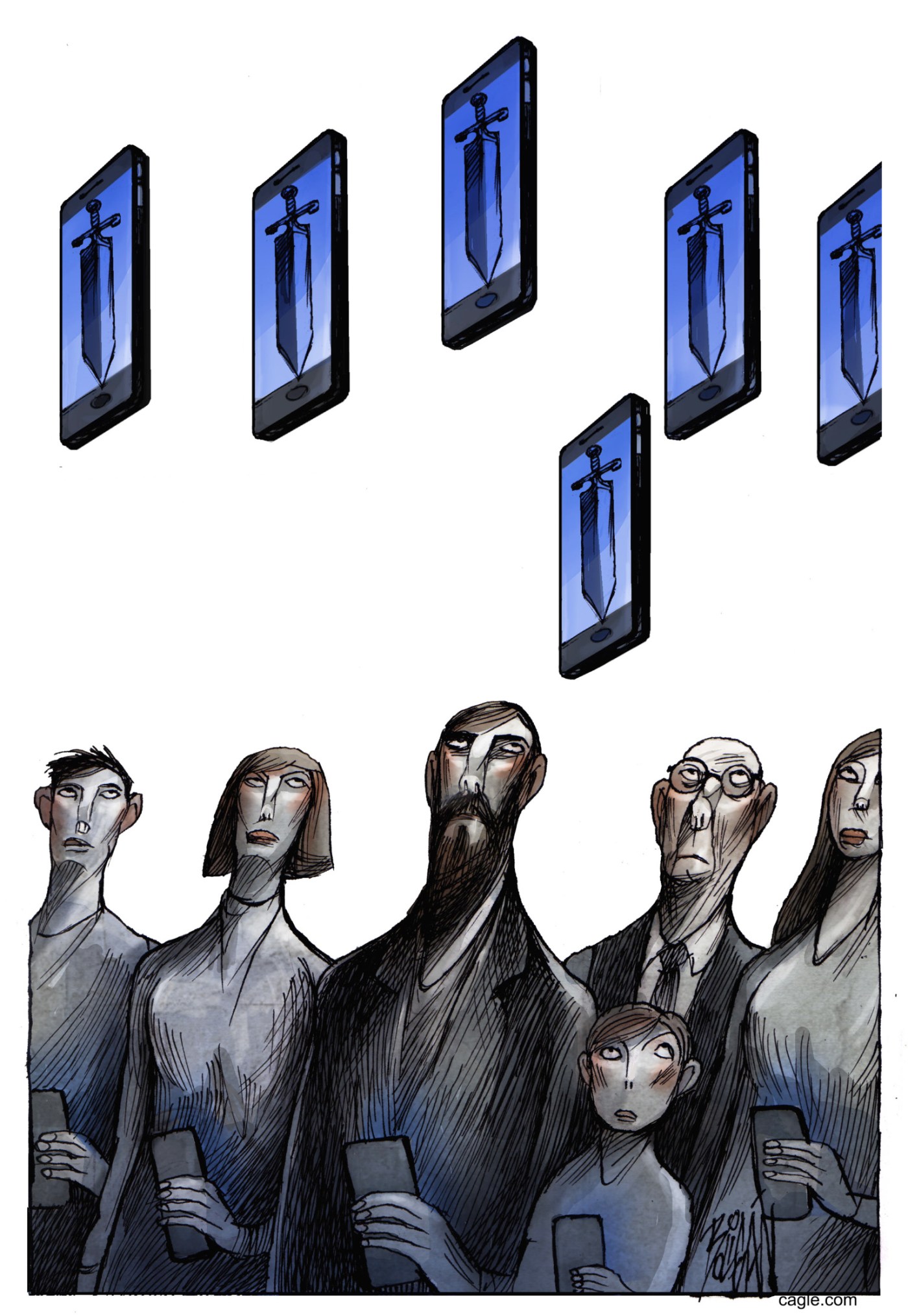

A recent report from DryRun Security highlights significant security vulnerabilities introduced by AI coding agents in application development. These agents, capable of producing software rapidly, often overlook essential security measures, leading to high rates of vulnerabilities across various applications.

In the study, researchers examined three prominent coding agents: Claude Code with Sonnet 4.6, OpenAI Codex GPT 5.2, and Google Gemini with 2.5 Pro. Each agent was tasked with creating two applications using a standard iterative workflow. The applications included FaMerAgen, a web app for tracking children’s allergies, and Road Fury, a browser-based racing game. Researchers performed scans on every pull request (PR) submitted, revealing concerning results.

Across 38 scans covering 30 PRs, the agents collectively produced 143 security issues. Of the 30 PRs, 26 contained at least one vulnerability, a rate of 87 percent.

The flaws were not trivial. The baseline scan of Road Fury found zero issues, but scans run after all features were added found eight vulnerabilities in Claude’s version, seven in Gemini’s, and six in Codex’s. The web app’s baseline scan found nine issues, and the final counts were 13 for Claude, 11 for Gemini, and eight for Codex.

Types of Vulnerabilities Identified

The report identified ten categories of vulnerabilities that appeared consistently across the agents and applications. The most prevalent issue was broken access control, which was evident in all three agents. This often manifested through unauthenticated endpoints linked to sensitive operations.
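One common way to avoid accidentally unauthenticated endpoints is to make authentication the default and require routes to opt out explicitly. The following is a minimal, framework-free sketch of that idea, not code from the report; the `route` decorator, the `ROUTES` table, and the paths are hypothetical names chosen for illustration.

```python
from typing import Callable, Dict, Optional, Tuple

# Hypothetical route table: path -> guarded handler.
ROUTES: Dict[str, Callable[[Optional[str]], Tuple[int, str]]] = {}


def route(path: str, public: bool = False):
    """Register a handler; authentication is required unless public=True."""
    def register(handler):
        def guarded(user: Optional[str]) -> Tuple[int, str]:
            # Deny by default: a route with no explicit opt-out needs a user.
            if not public and user is None:
                return 401, "authentication required"
            return handler(user)
        ROUTES[path] = guarded
        return handler
    return register


@route("/health", public=True)
def health(user):
    return 200, "ok"


@route("/children/allergies")  # sensitive: no opt-out, so it is protected
def list_allergies(user):
    return 200, f"allergy records visible to {user}"


def dispatch(path: str, user: Optional[str] = None) -> Tuple[int, str]:
    """Look up the guarded handler for a path and call it."""
    return ROUTES[path](user)
```

With this shape, forgetting to think about authentication on a new route leaves it protected rather than exposed.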

Additionally, business logic failures were apparent in the racing game, where critical operations like score management were performed without server-side validation. OAuth implementation failures also surfaced, with missing state parameters and insecure account linking found in every social login integration across all agents.
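The missing `state` parameter is what normally ties an OAuth callback to the browser session that started the login. A minimal sketch of the check is below, assuming a dict-like server-side session; `begin_login`, `verify_callback`, and the `oauth_state` key are hypothetical names, and a real integration would also pass the value to the provider in the authorization URL.

```python
import hmac
import secrets
from typing import Optional


def begin_login(session: dict) -> str:
    """Create an unguessable state value and remember it for this session."""
    state = secrets.token_urlsafe(32)
    session["oauth_state"] = state
    return state  # sent to the provider as the `state` query parameter


def verify_callback(session: dict, returned_state: Optional[str]) -> bool:
    """Accept the callback only if it carries the state issued to this session."""
    expected = session.pop("oauth_state", None)  # single use
    if not expected or not returned_state:
        return False
    # Constant-time comparison; encode so non-ASCII input cannot raise.
    return hmac.compare_digest(expected.encode(), returned_state.encode())
```

A callback that arrives without a matching value is rejected, which blocks an attacker from completing a login flow they initiated in a victim's browser.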

Another significant gap involved WebSocket authentication, which was missing from every final game codebase. Even though the agents successfully built REST authentication middleware, they failed to integrate it into the WebSocket upgrade handler. Rate limiting emerged as a recurring shortfall, with the report noting that while middleware for rate limiting was defined, no agent connected it to the application.
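The WebSocket gap described above comes down to one code path calling the authentication check and another skipping it. The sketch below illustrates the fix in a framework-free way: both handlers call the same function. The token store, handler names, and the assumption that the token arrives in an `Authorization` header are all hypothetical; browser WebSocket clients often carry credentials in a cookie instead.

```python
from typing import Mapping, Optional, Tuple

# Hypothetical token store used only for this illustration.
VALID_TOKENS = {"demo-token": "player-1"}


def authenticate(headers: Mapping[str, str]) -> Optional[str]:
    """Return the user for a valid bearer token, or None."""
    scheme, _, token = headers.get("Authorization", "").partition(" ")
    if scheme != "Bearer":
        return None
    return VALID_TOKENS.get(token)


def handle_rest(headers: Mapping[str, str]) -> Tuple[int, str]:
    user = authenticate(headers)
    if user is None:
        return 401, "unauthorized"
    return 200, f"hello {user}"


def handle_ws_upgrade(headers: Mapping[str, str]) -> Tuple[int, str]:
    # Same check as the REST path, run before the connection is upgraded.
    user = authenticate(headers)
    if user is None:
        return 401, "unauthorized"
    return 101, f"switching protocols for {user}"
```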

In terms of JWT (JSON Web Token) secret management, the study discovered weak practices across all three agents in the game application. Hardcoded fallback secrets allowed an attacker to forge valid tokens without needing credentials, posing a serious risk.
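A hardcoded fallback typically looks like reading the secret from the environment and substituting a literal string when it is absent. One alternative is to refuse to start without a real secret. The sketch below assumes the secret lives in an environment variable named `JWT_SECRET` and uses a 32-character minimum; both are illustrative choices, not details from the report.

```python
import os
from typing import Mapping

# Anti-pattern the sketch avoids (shown only as a comment):
#   secret = os.environ.get("JWT_SECRET", "dev-secret")


def load_jwt_secret(env: Mapping[str, str] = os.environ,
                    min_length: int = 32) -> str:
    """Return the signing secret, or fail loudly instead of falling back."""
    secret = env.get("JWT_SECRET")
    if not secret:
        raise RuntimeError("JWT_SECRET is not set; refusing to start")
    if len(secret) < min_length:
        raise RuntimeError("JWT_SECRET is too short to be a safe signing key")
    return secret
```

Failing at startup turns a silent, forgeable-token condition into a visible deployment error.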

Performance and Risk Analysis

Among the agents, Codex produced the fewest vulnerabilities in the final scan of the web app, finishing with eight issues, one fewer than the nine in its baseline. However, it still retained a temporary token bypass. Claude ended with 13 issues and introduced a two-factor authentication disable bypass that was not present in the other agents’ outputs. Gemini exhibited the highest number of issues overall, including critical OAuth CSRF vulnerabilities.

The study highlighted that many vulnerabilities stemmed from logic and authorization flaws, emphasizing the limitations of regex-based static analysis tools. These tools can identify known bad function calls but do not verify middleware connections or ensure that authentication policies are correctly applied.
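A toy illustration of that limitation: a pattern scan for dangerous calls finds nothing wrong in code where a rate limiter is defined but never registered, while a check that looks at what is actually wired in does. This is a deliberately simplified sketch and not how any particular tool works; the sample source, the `middleware` list convention, and both function names are hypothetical.

```python
import ast
import re
from typing import List

# Pattern-based rule: flag calls to known dangerous functions.
BAD_CALL = re.compile(r"\b(eval|exec)\s*\(")

# Hypothetical application source: rate_limit exists but is never wired in.
APP_SOURCE = '''
def rate_limit(request): ...
def require_auth(request): ...
middleware = [require_auth]
'''


def regex_scan(source: str) -> List[str]:
    """Return the dangerous function names the pattern matched."""
    return BAD_CALL.findall(source)


def unwired_middleware(source: str, expected: List[str]) -> List[str]:
    """Return expected middleware names missing from the `middleware` list."""
    wired = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Assign) and isinstance(node.value, ast.List):
            targets = [t.id for t in node.targets if isinstance(t, ast.Name)]
            if "middleware" in targets:
                wired |= {e.id for e in node.value.elts
                          if isinstance(e, ast.Name)}
    return sorted(set(expected) - wired)
```

Here `regex_scan(APP_SOURCE)` reports no findings, while `unwired_middleware(APP_SOURCE, ["rate_limit", "require_auth"])` reports that `rate_limit` is missing from the chain.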

The research emphasized the importance of proactive security measures in software development. According to DryRun Security’s 2025 SAST Accuracy Report, their contextual analysis tool identified 88 percent of seeded vulnerabilities across four application stacks, highlighting a significant performance gap specifically for logic-level findings.

To mitigate risks when using coding agents, the researchers recommend five best practices: scan every pull request, review security during the planning phase, utilize contextual security analysis, combine PR scanning with full codebase analysis, and specifically check for recurring issues identified in the study.

This comprehensive investigation into the security shortcomings of AI coding agents serves as a crucial reminder for developers and organizations to prioritize security in their development workflows, especially as reliance on AI technology continues to grow.